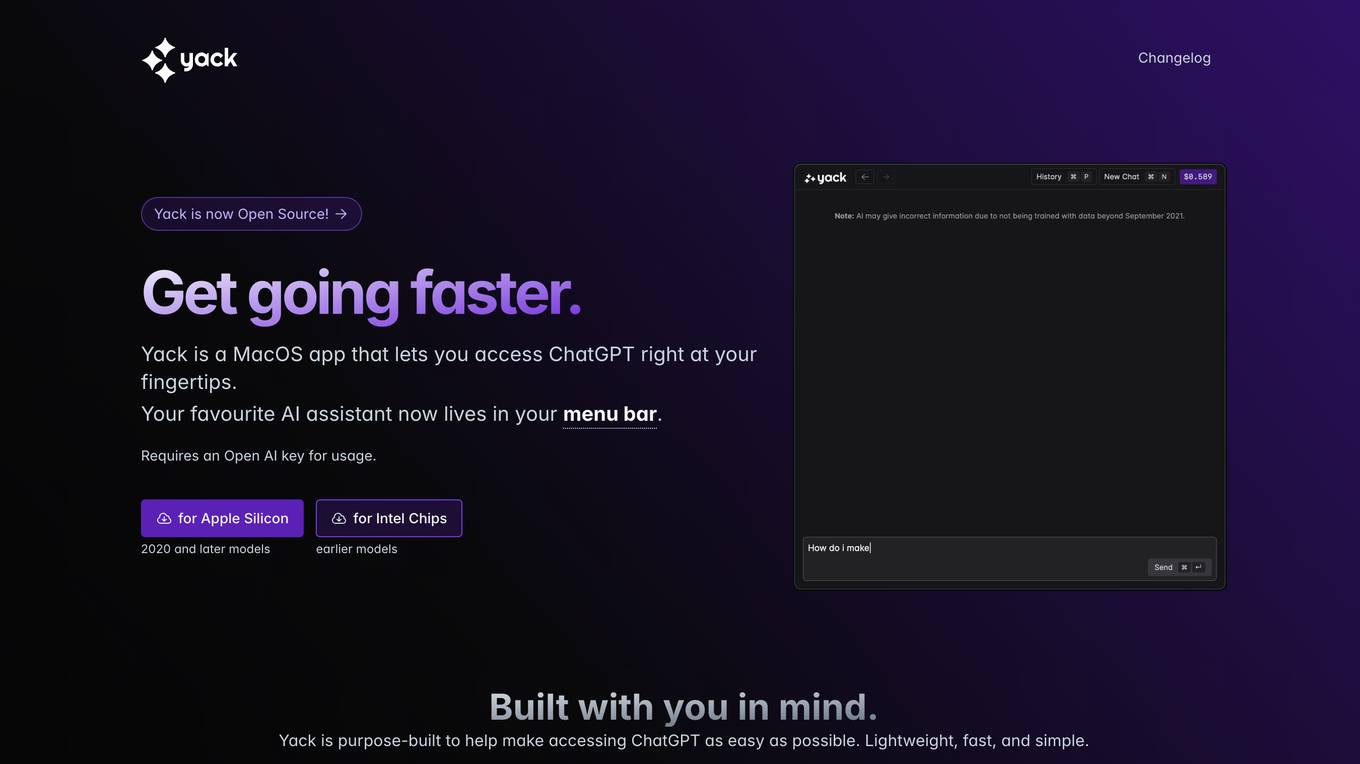

AI tools for Rust

Related Tools:

Warp

Warp is a terminal reimagined with AI and collaborative tools for better productivity. It is built with Rust for speed and has an intuitive interface. Warp includes features such as modern editing, command generation, reusable workflows, and Warp Drive. Warp AI allows users to ask questions about programming and get answers, recall commands, and debug errors. Warp Drive helps users organize hard-to-remember commands and share them with their team. Warp is a private and secure application that is trusted by hundreds of thousands of professional developers.

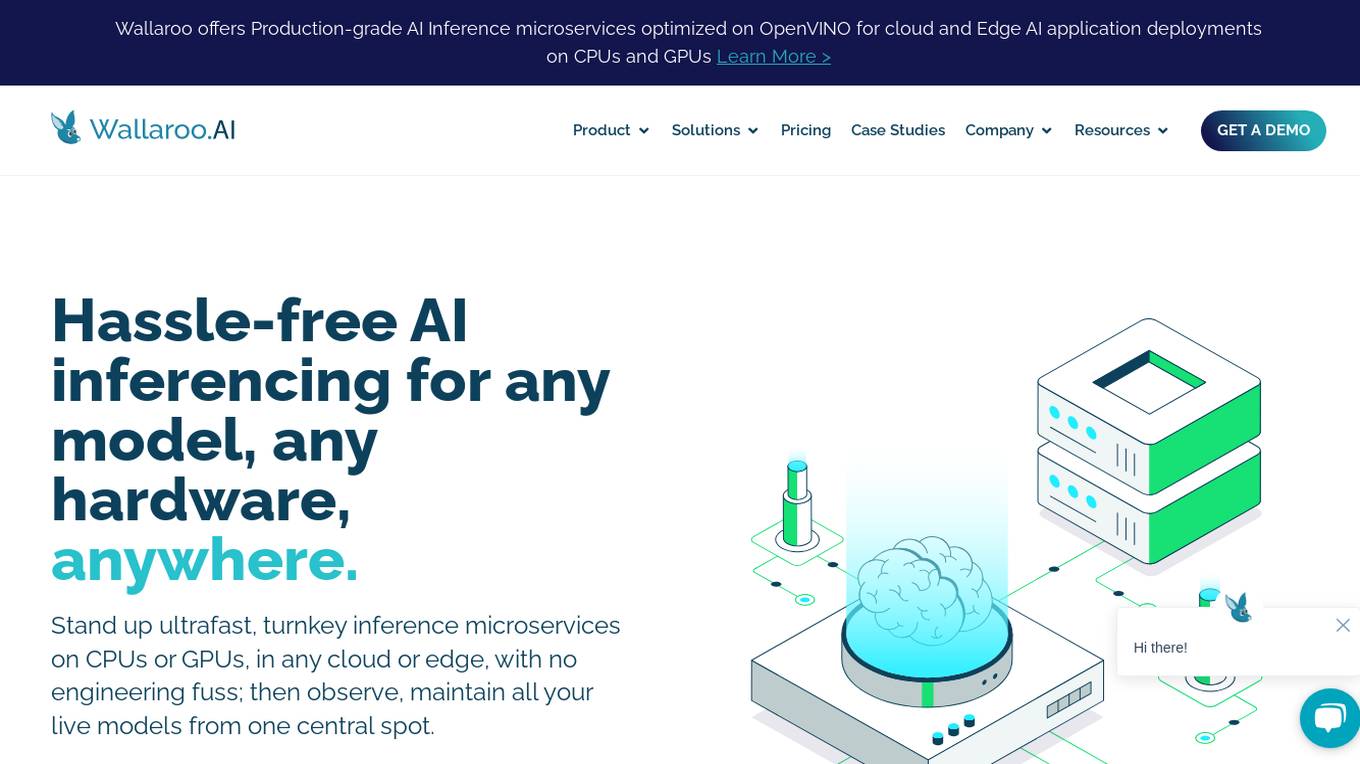

Wallaroo.AI

Wallaroo.AI is an AI inference platform that offers production-grade AI inference microservices optimized on OpenVINO for cloud and Edge AI application deployments on CPUs and GPUs. It provides hassle-free AI inferencing for any model, any hardware, anywhere, with ultrafast turnkey inference microservices. The platform enables users to deploy, manage, observe, and scale AI models effortlessly, reducing deployment costs and time-to-value significantly.

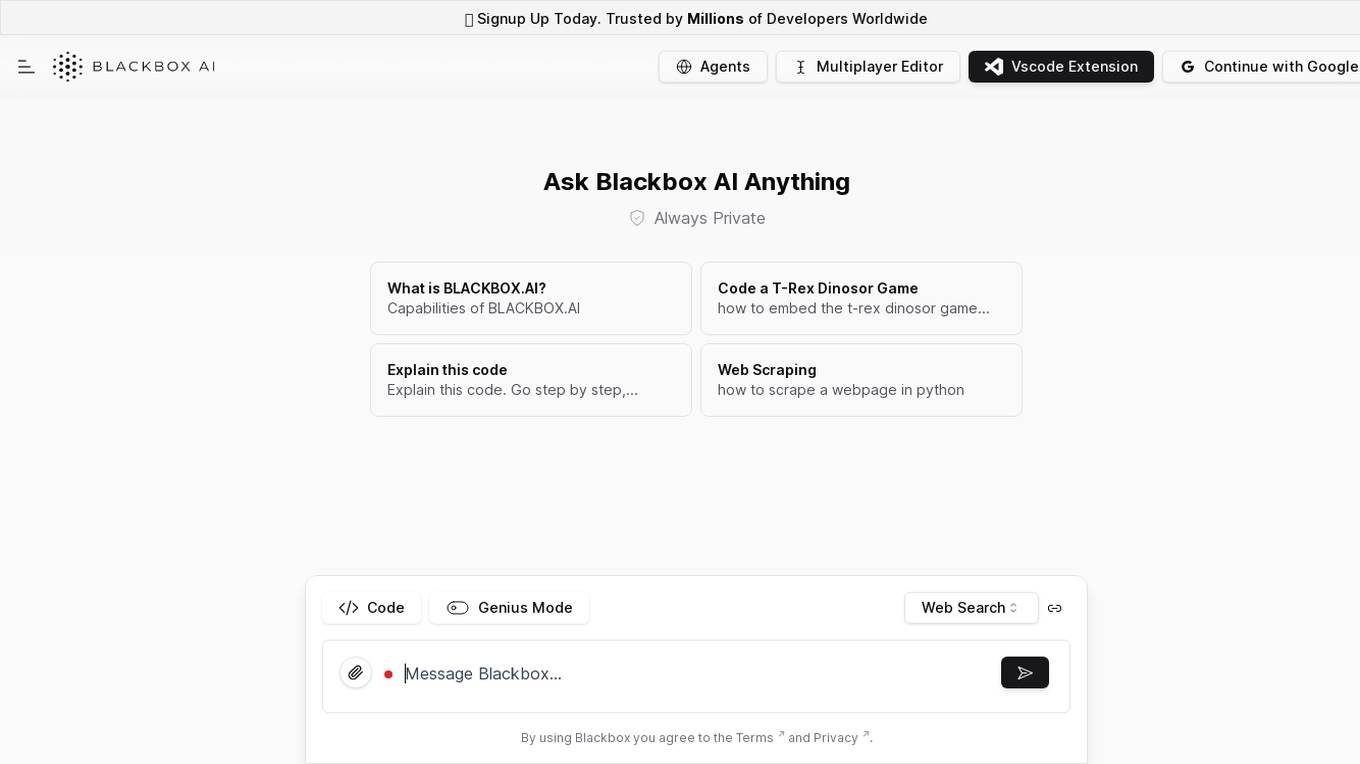

Chat Blackbox

Chat Blackbox is an AI tool that specializes in AI code generation, code chat, and code search. It provides a platform where users can interact with AI to generate code, discuss code-related topics, and search for specific code snippets. The tool leverages artificial intelligence algorithms to enhance the coding experience and streamline the development process. With Chat Blackbox, users can access a wide range of features to improve their coding skills and efficiency.

CodeDefender α

CodeDefender α is an AI-powered tool that helps developers and non-developers improve code quality and security. It integrates with popular IDEs like Visual Studio, VS Code, and IntelliJ, providing real-time code analysis and suggestions. CodeDefender supports multiple programming languages, including C/C++, C#, Java, Python, and Rust. It can detect a wide range of code issues, including security vulnerabilities, performance bottlenecks, and correctness errors. Additionally, CodeDefender offers features like custom prompts, multiple models, and workspace/solution understanding to enhance code comprehension and knowledge sharing within teams.

Blackbox

Blackbox is an AI-powered code generation, code chat, and code search tool that helps developers write better code faster. With Blackbox, you can generate code snippets, chat with an AI assistant about code, and search for code examples from a massive database.

AI Code Translator

AI Code Translator is an online tool that allows users to translate code or natural language into multiple programming languages. It is powered by artificial intelligence (AI) and provides intelligent and efficient code translation. With AI Code Translator, developers can save time and effort by quickly converting code between different languages, optimizing their development process.

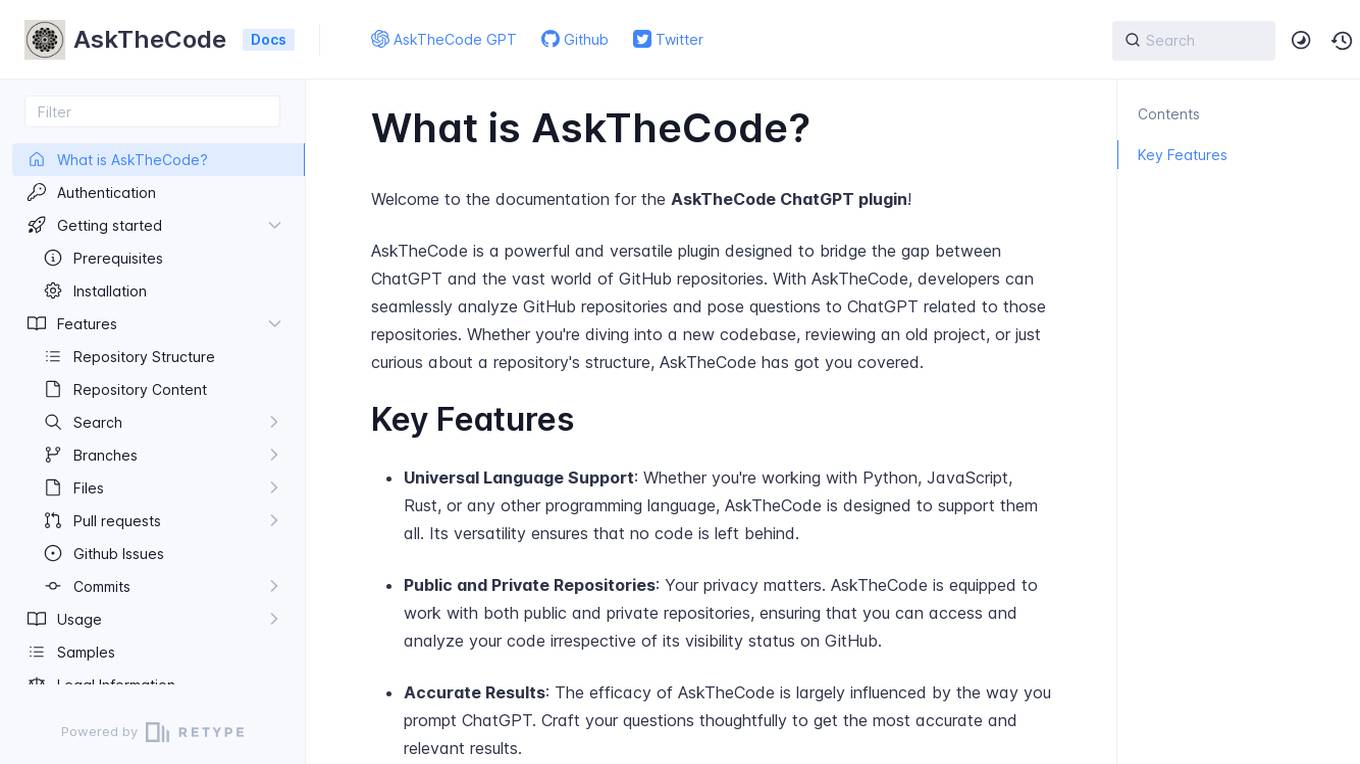

AskTheCode

AskTheCode is a plugin that connects ChatGPT to GitHub repositories. It lets developers analyze GitHub repositories and ask questions about them using ChatGPT. The tool works with any programming language and with both public and private repositories, and it provides accurate results based on thoughtful prompts. AskTheCode aims to assist developers in exploring and understanding codebases, projects, and repository structures.

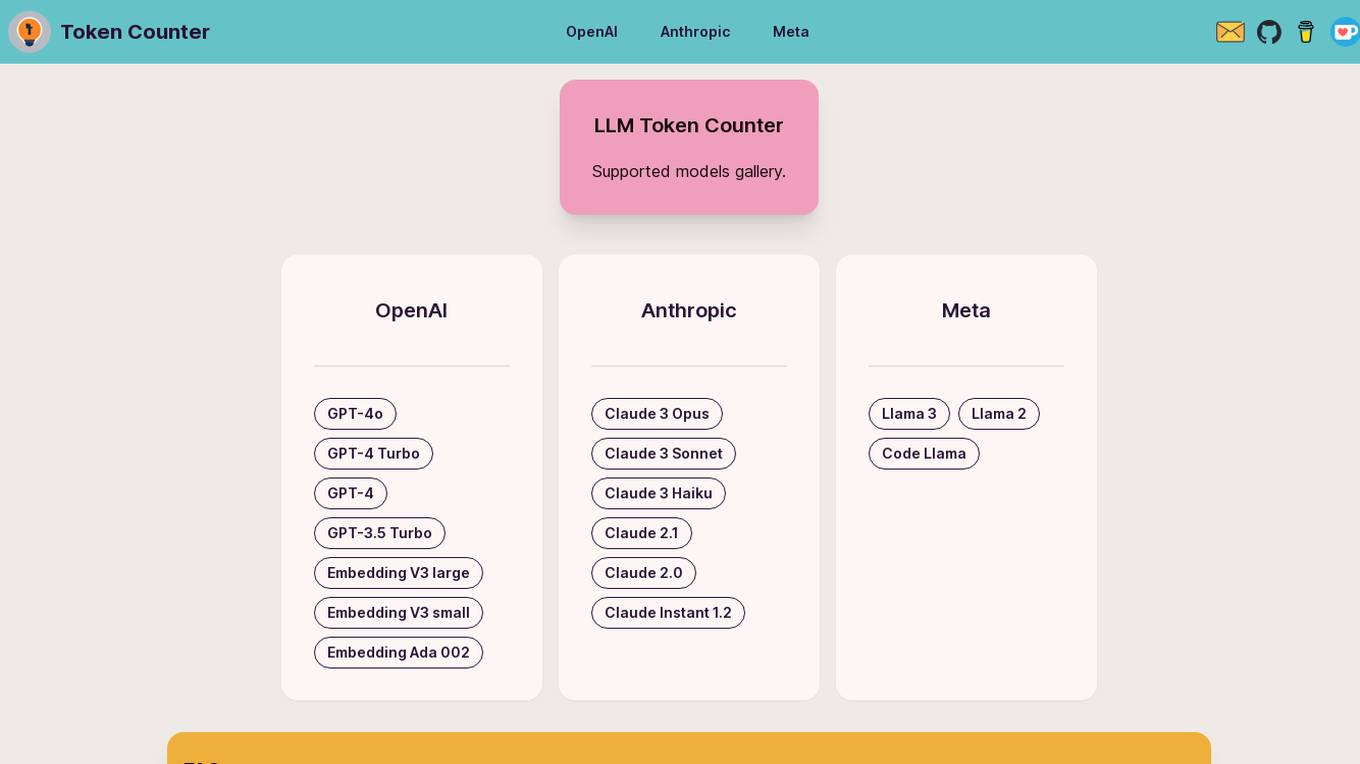

LLM Token Counter

The LLM Token Counter helps users manage token limits for various large language models (LLMs) such as GPT-3.5, GPT-4, Claude-3, and Llama-3. It uses Transformers.js, a JavaScript implementation of the Hugging Face Transformers library, to calculate token counts client-side. Because counting happens in the browser, prompts are not transmitted to external servers.

Replit

Replit is a software creation platform that provides an integrated development environment (IDE), artificial intelligence (AI) assistance, and deployment services. It allows users to build, test, and deploy software projects directly from their browser, without the need for local setup or configuration. Replit offers real-time collaboration, code generation, debugging, and autocompletion features powered by AI. It supports multiple programming languages and frameworks, making it suitable for a wide range of development projects.

Local AI Playground

Local AI Playground is a free and open-source native app designed for AI management, verification, and inferencing. It allows users to experiment with AI offline in a private environment without the need for a GPU. The application is memory-efficient and compact, with a Rust backend, making it suitable for various operating systems. It offers features such as CPU inferencing, model management, and digest verification. Users can start a local streaming server for AI inferencing with just two clicks. Local AI Playground aims to simplify the AI development process and provide a user-friendly experience for both offline and online AI applications.

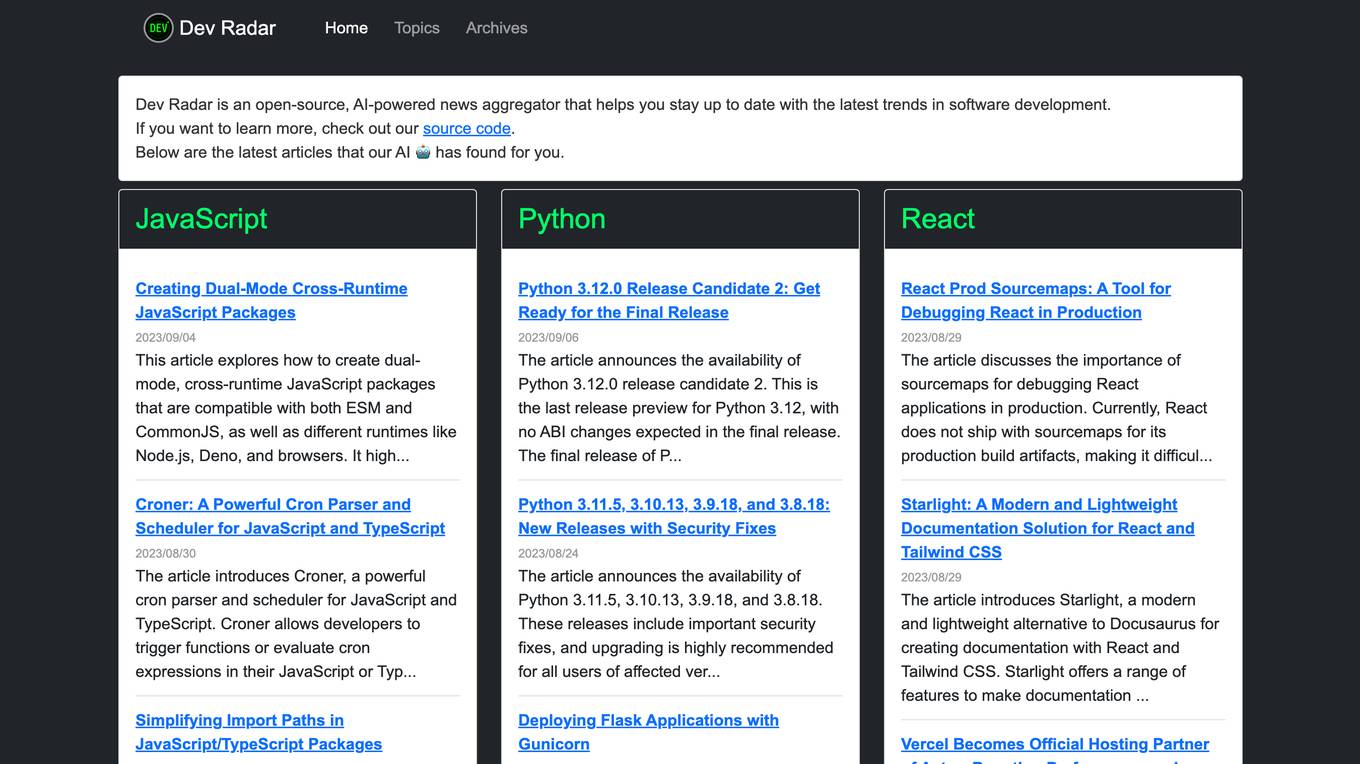

Dev Radar

Dev Radar is an open-source, AI-powered news aggregator that helps users stay up to date with the latest trends in software development. It provides curated articles on various programming languages and frameworks, offering insights and updates for developers. Users can explore a wide range of topics related to JavaScript, Python, React, TypeScript, Rust, Go, Node.js, Deno, Ruby, and more. Dev Radar aims to streamline the process of discovering relevant and valuable content in the ever-evolving field of software development.

Rust

Powerful Rust coding assistant. Trained on a vast array of the best up-to-date Rust resources, libraries and frameworks. Start with a quest! 🥷 (V1.7)

RustChat

Hello! I'm your Rust language learning and practical assistant created by AlexZhang. I can help you learn and practice Rust whether you are a beginner or professional. I can provide suitable learning resources and hands-on projects for you. You can view all supported shortcut commands with /list.

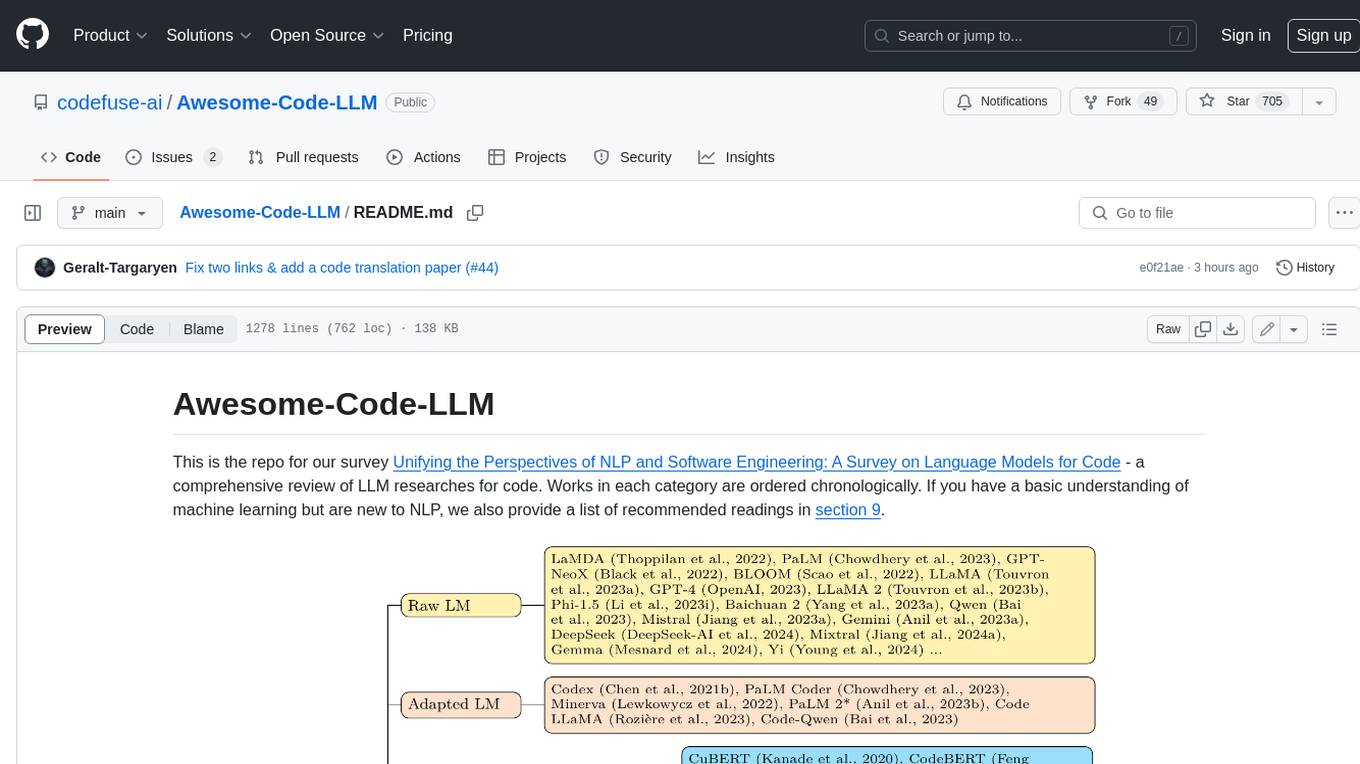

Awesome-Code-LLM

screeps-starter-rust

screeps-starter-rust is a Rust AI starter kit for Screeps: World, a JavaScript-based MMO game. It uses the screeps-game-api bindings from the rustyscreeps organization and wasm-pack to build Rust code to WebAssembly. The example includes Rollup for bundling JavaScript, Babel for transpiling code, and the screeps-api Node.js package for deployment. Users can refer to the Rust version of the game API documentation at https://docs.rs/screeps-game-api/. The tool supports most crates on crates.io, except those that interact with OS APIs.

coding-with-ai

Coding-with-ai is a curated collection of techniques and best practices for utilizing AI coding tools to achieve transformative results in coding projects. It bridges the gap between AI coding demos and daily coding reality by providing insights into specific patterns like memory files, test-driven regeneration, and parallel AI sessions. The repository offers guidance on setting up memory files, writing detailed specs, drafting solutions before using assistants, getting multiple options, choosing stable libraries, and triggering careful planning. It also covers UI prototyping, coding practices, debugging strategies, testing methodologies, and cross-stage techniques for efficient coding with AI tools.

langchain-rust

LangChain Rust is a library for building applications with Large Language Models (LLMs) through composability. It provides a set of tools and components that can be used to create conversational agents, document loaders, and other applications that leverage LLMs. LangChain Rust supports a variety of LLMs, including OpenAI, Azure OpenAI, Ollama, and Anthropic Claude. It also supports a variety of embeddings, vector stores, and document loaders. LangChain Rust is designed to be easy to use and extensible, making it a great choice for developers who want to build applications with LLMs.

awesome-AI-driven-development

Awesome AI-Driven Development is a curated list of tools, frameworks, and resources for AI-driven development. It includes AI code editors, terminal-based coding agents, IDE plugins & extensions, multi-agent systems, code generation & templates, testing & quality assurance tools, Model Context Protocol implementations, pull request & code review tools, project management & documentation tools, language models for code, development workflows tools, code search & analysis tools, specialized tools for Git & version control, cloud & DevOps, language-specific tasks, terminal & shell utilities, prompt & context management tools, Copilot extensions & alternatives, learning & tutorials resources, and configuration & enhancement tools for AI coding assistants.

AI-Studio

MindWork AI Studio is a desktop application that provides a unified chat interface for Large Language Models (LLMs). It is free to use for personal and commercial purposes, lets users choose their LLM providers independently, offers unrestricted usage through the providers' APIs, and is cost-effective with pay-as-you-go pricing. The app prioritizes privacy, flexibility, minimal storage and memory usage, and low impact on system resources. Users can support the project through monthly contributions or one-time donations, and companies can sponsor the project for public relations and marketing benefits. Planned features include support for more LLM providers, system prompt integration, text replacement for privacy, and advanced interactions tailored for various use cases.

fenic

fenic is an opinionated DataFrame framework from typedef.ai for building AI and agentic applications. It transforms unstructured and structured data into insights using familiar DataFrame operations enhanced with semantic intelligence. It supports markdown, transcripts, and semantic operators, along with efficient batch inference across various model providers. fenic is purpose-built for LLM inference, providing a query engine designed for AI workloads, semantic operators as first-class citizens, native unstructured data support, production-ready infrastructure, and a familiar DataFrame API.

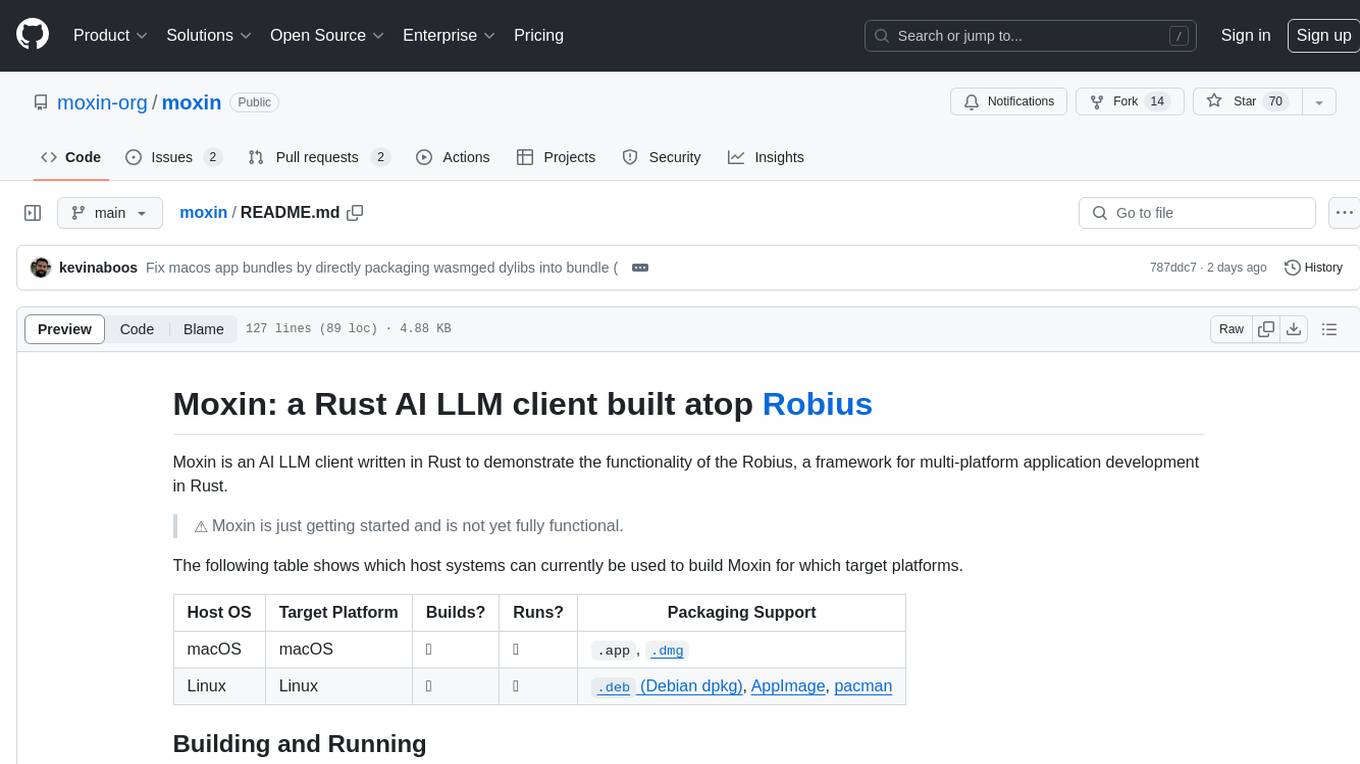

moxin

Moxin is an AI LLM client written in Rust to demonstrate the functionality of the Robius framework for multi-platform application development. It is currently in early stages of development and not fully functional. The tool supports building and running on macOS and Linux systems, with packaging options available for distribution. Users can install the required WasmEdge WASM runtime and dependencies to build and run Moxin. Packaging for distribution includes generating `.deb` Debian packages, AppImage, and pacman installation packages for Linux, as well as `.app` bundles and `.dmg` disk images for macOS. The macOS app is not signed, leading to a warning on installation, which can be resolved by removing the quarantine attribute from the installed app.

AITreasureBox

AITreasureBox is a comprehensive collection of AI tools and resources designed to simplify and accelerate the development of AI projects. It provides a wide range of pre-trained models, datasets, and utilities that can be easily integrated into various AI applications. With AITreasureBox, developers can quickly prototype, test, and deploy AI solutions without having to build everything from scratch. Whether you are working on computer vision, natural language processing, or reinforcement learning projects, AITreasureBox has something to offer for everyone. The repository is regularly updated with new tools and resources to keep up with the latest advancements in the field of artificial intelligence.

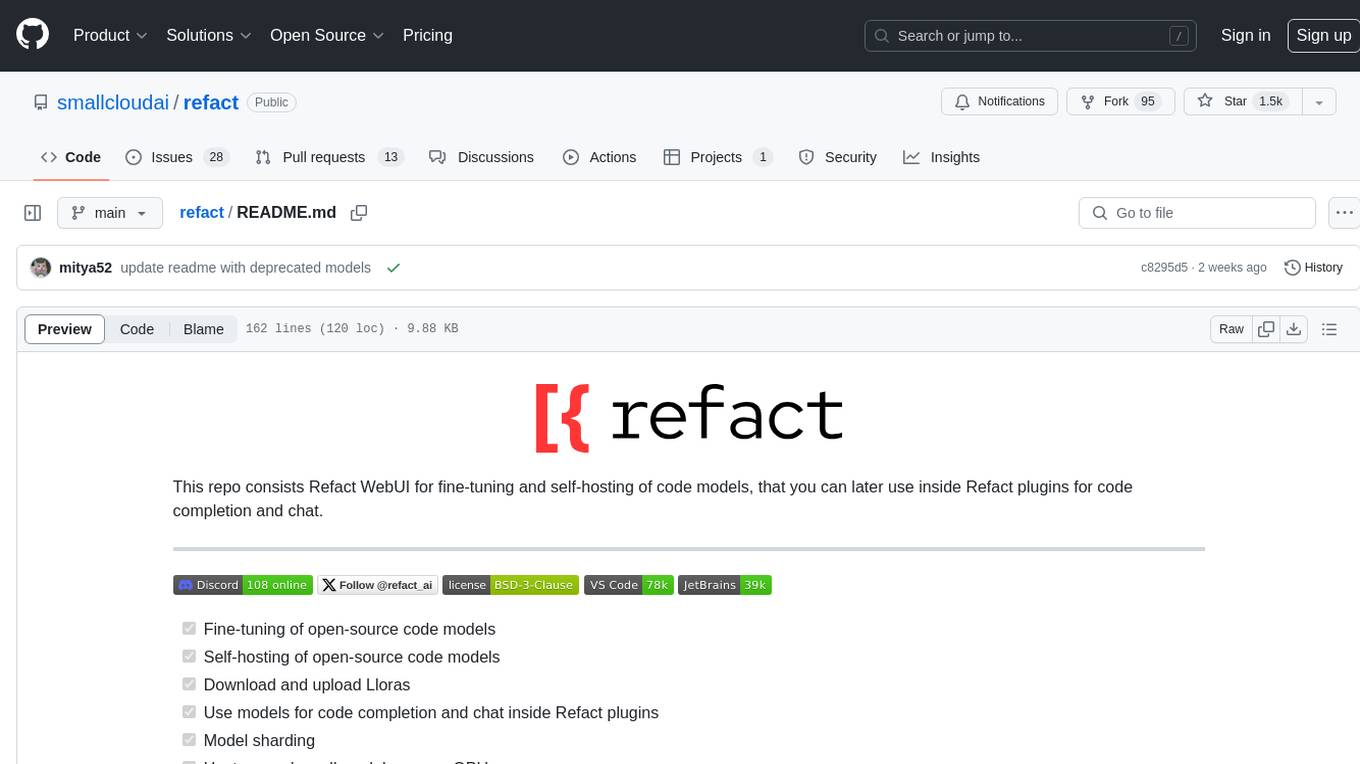

refact

This repository contains the Refact WebUI for fine-tuning and self-hosting code models, which can be used inside Refact plugins for code completion and chat. Users can fine-tune open-source code models, self-host them, download and upload LoRAs, use models for code completion and chat inside Refact plugins, shard models, host multiple small models on one GPU, and connect GPT models for chat using OpenAI and Anthropic keys. The repository provides a Docker container for running the self-hosted server and supports various models for completion, chat, and fine-tuning. Refact is free for individuals and small teams under the BSD-3-Clause license, with custom installation options available for GPU support. Community and support channels include contributing guidelines, GitHub issues for bugs, a community forum, Discord for chatting, and Twitter for product news and updates.

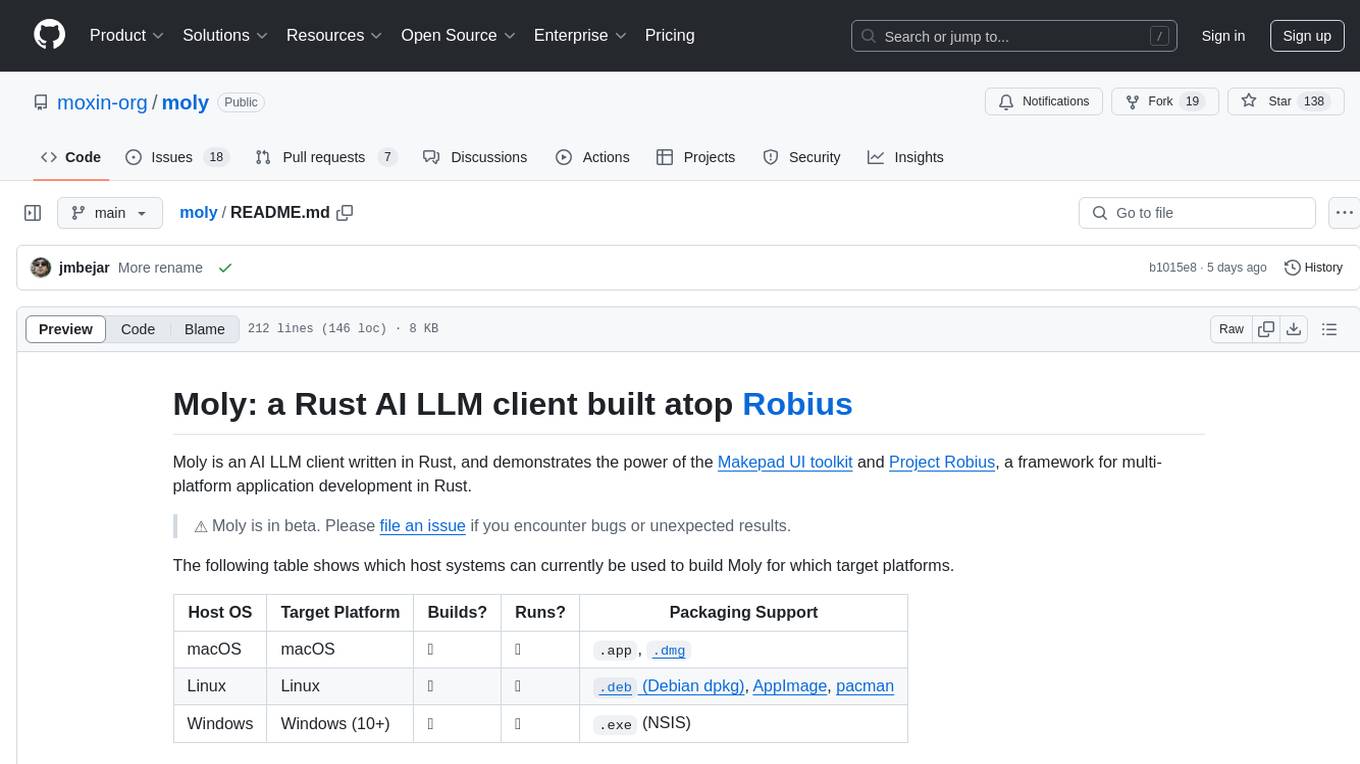

moly

Moly is an AI LLM client written in Rust, showcasing the capabilities of the Makepad UI toolkit and Project Robius, a framework for multi-platform application development in Rust. It is currently in beta, allowing users to build and run Moly on macOS, Linux, and Windows. The tool provides packaging support for different platforms, such as `.app`, `.dmg`, `.deb`, AppImage, pacman, and `.exe` (NSIS). Users can easily set up WasmEdge using `moly-runner` and leverage `cargo` commands to build and run Moly. Additionally, Moly offers pre-built releases for download and supports packaging for distribution on Linux, Windows, and macOS.

llms-txt-hub

The llms.txt hub is a centralized repository for llms.txt implementations and resources. It helps LLM-powered tools and services work with documentation and codebases by standardizing documentation access, improving how AI models interpret content and how accurately they respond, and setting boundaries for AI content interaction across projects and platforms.

shimmy

Shimmy is a 5.1MB single-binary local inference server providing OpenAI-compatible endpoints for GGUF models. It offers fast, reliable AI inference with sub-second responses, zero configuration, and automatic port management. It suits developers seeking privacy, cost-effectiveness, speed, and easy integration with popular tools like VSCode and Cursor. Shimmy is designed to be invisible infrastructure that simplifies local AI development and deployment.
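As a rough illustration of what "OpenAI-compatible" means for a client, the sketch below builds a chat completion request using only the Rust standard library. The `/v1/chat/completions` path follows the OpenAI API convention. The host, port, and model name are placeholders supplied by the caller, not Shimmy defaults, and the helper names (`json_escape`, `chat_body`, `chat_request`, `send`) are hypothetical.

```rust
use std::io::{Read, Write};
use std::net::TcpStream;

/// Escape a string so it can sit inside a JSON string literal.
fn json_escape(s: &str) -> String {
    let mut out = String::with_capacity(s.len());
    for c in s.chars() {
        match c {
            '"' => out.push_str("\\\""),
            '\\' => out.push_str("\\\\"),
            '\n' => out.push_str("\\n"),
            '\r' => out.push_str("\\r"),
            '\t' => out.push_str("\\t"),
            // Remaining control characters use the \uXXXX form.
            c if (c as u32) < 0x20 => out.push_str(&format!("\\u{:04x}", c as u32)),
            c => out.push(c),
        }
    }
    out
}

/// Build the JSON body of an OpenAI-style chat completion request
/// with a single user message.
fn chat_body(model: &str, prompt: &str) -> String {
    format!(
        "{{\"model\":\"{}\",\"messages\":[{{\"role\":\"user\",\"content\":\"{}\"}}]}}",
        json_escape(model),
        json_escape(prompt)
    )
}

/// Build a raw HTTP/1.1 POST request for the chat completions endpoint.
/// `host` and `port` are assumptions: point them at wherever the local
/// server is actually listening.
fn chat_request(host: &str, port: u16, model: &str, prompt: &str) -> String {
    let body = chat_body(model, prompt);
    format!(
        "POST /v1/chat/completions HTTP/1.1\r\nHost: {}:{}\r\nContent-Type: application/json\r\nContent-Length: {}\r\nConnection: close\r\n\r\n{}",
        host,
        port,
        body.len(), // byte length, as Content-Length requires
        body
    )
}

/// Send the request over plain TCP and return the raw response text
/// (status line, headers, and body, unparsed).
fn send(host: &str, port: u16, request: &str) -> std::io::Result<String> {
    let mut stream = TcpStream::connect((host, port))?;
    stream.write_all(request.as_bytes())?;
    let mut response = String::new();
    stream.read_to_string(&mut response)?;
    Ok(response)
}
```

With a server running locally, calling `send(host, port, &chat_request(host, port, model, prompt))` would return the unparsed HTTP response. A real client would normally use an HTTP library and a JSON parser instead of hand-built strings.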

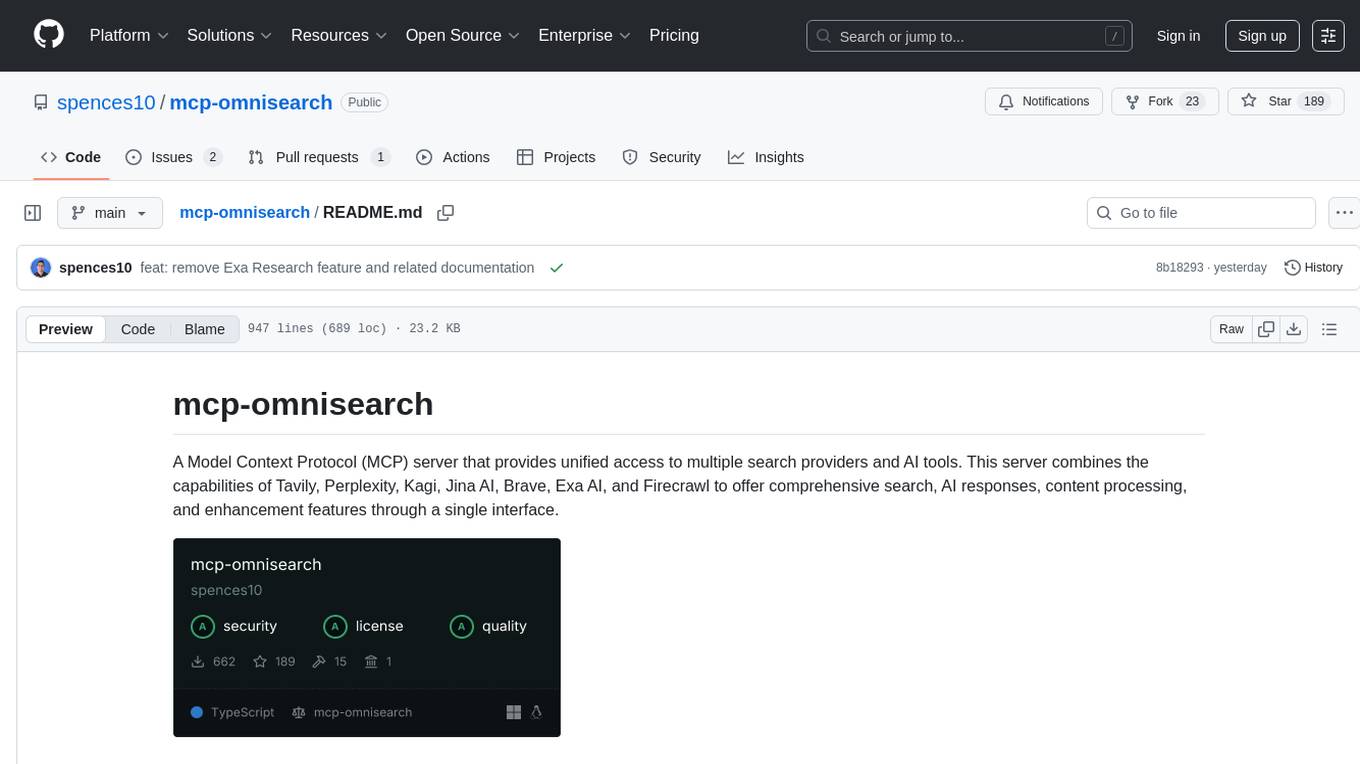

mcp-omnisearch

mcp-omnisearch is a Model Context Protocol (MCP) server that acts as a unified gateway to multiple search providers and AI tools. It integrates Tavily, Perplexity, Kagi, Jina AI, Brave, Exa AI, and Firecrawl to offer a wide range of search, AI response, content processing, and enhancement features through a single interface. The server provides powerful search capabilities, AI response generation, content extraction, summarization, web scraping, structured data extraction, and more. It is designed to work flexibly with the API keys available, enabling users to activate only the providers they have keys for and easily add more as needed.
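The "activate only the providers you have keys for" behavior can be sketched as a filter over the environment. This is an illustrative Rust sketch, not the project's own code. The environment variable names in `PROVIDER_KEYS` and the function names are assumptions made for the example.

```rust
use std::collections::HashMap;

/// Provider name paired with the environment variable that would hold
/// its API key. The variable names are assumed for illustration.
const PROVIDER_KEYS: &[(&str, &str)] = &[
    ("tavily", "TAVILY_API_KEY"),
    ("perplexity", "PERPLEXITY_API_KEY"),
    ("kagi", "KAGI_API_KEY"),
    ("jina", "JINA_AI_API_KEY"),
    ("brave", "BRAVE_API_KEY"),
    ("exa", "EXA_API_KEY"),
    ("firecrawl", "FIRECRAWL_API_KEY"),
];

/// Return the providers whose key is present and non-blank in `env`,
/// in the order they are listed in `PROVIDER_KEYS`.
fn enabled_providers(env: &HashMap<String, String>) -> Vec<&'static str> {
    PROVIDER_KEYS
        .iter()
        .filter(|(_, var)| env.get(*var).map_or(false, |v| !v.trim().is_empty()))
        .map(|(name, _)| *name)
        .collect()
}

/// Convenience wrapper that reads the current process environment.
fn enabled_providers_from_process_env() -> Vec<&'static str> {
    let env: HashMap<String, String> = std::env::vars().collect();
    enabled_providers(&env)
}
```

Taking the environment as a map keeps the selection logic testable. Adding a provider is then a matter of setting one more variable, which matches the incremental setup the description mentions.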

aichat

Aichat is an AI-powered CLI chat and copilot tool that seamlessly integrates with over 10 leading AI platforms, providing a powerful combination of chat-based interaction, context-aware conversations, and AI-assisted shell capabilities, all within a customizable and user-friendly environment.

meeting-minutes

Meeting-minutes is an open-source AI assistant for taking meeting notes. It captures live meeting audio, transcribes it in real time, and generates summaries while keeping user data private. Processing happens locally, without external servers or complex infrastructure, so teams can focus on the discussion while meeting content is captured and organized automatically. Features include a modern UI, real-time audio capture, live transcription, speaker diarization, and a rich text editor for notes. A Rust-based implementation is also offered for better performance and native integration. The backend supports multiple LLM providers through a unified interface, with configurations for Anthropic, Groq, and Ollama models. The system architecture comprises an audio capture service, transcription engine, LLM orchestrator, data services, and API layer. Future plans include a database connection for saving meeting minutes, better summarization quality, and download options for meeting transcriptions and summaries. Setup requires Node.js, Python, FFmpeg, and Rust. Development guidelines cover project structure, testing, documentation, type hints, and ESLint configuration. Contributions are welcome under the MIT License.